The skills your people need in the age of AI are hidden in plain sight

Is AI transformation a technology problem? Most organizations are treating it that way.

They’re buying tools, launching pilots, and measuring adoption rates. Leadership gets a dashboard showing how many employees completed an AI literacy module, how many tools have been deployed, and how many workflows have been “touched” by automation. But does this equate to transformation?

It appears not.

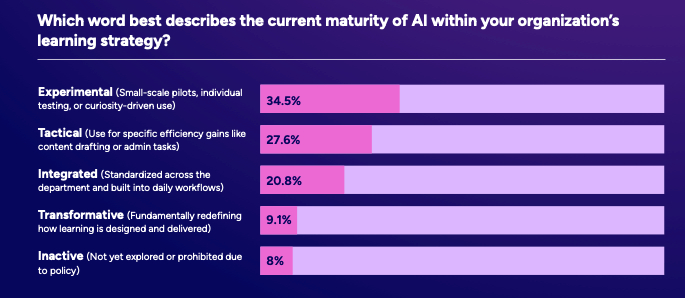

In our recent report, The AI Readiness Gap: The 2026 Enterprise Learning Wake-Up Call, we found that only 9% of organizations have used AI to genuinely redefine how work gets done. Meanwhile, 35% are still stuck in experimentation mode: running pilots that don’t scale, investing in tools that don’t stick, and wondering why AI hasn’t delivered the productivity leap they were promised.

Well, that’s because we’re confusing activity with progress.

Only 9% of organizations have used AI to redefine their workflows. 35% remain in the experimentation stage.

The pilot trap

You know how it goes: A tool gets deployed. A training module gets assigned. Completion rates climb. And then… not much changes. The tool gets used occasionally, in ways that are shallow and safe, and the workflows it was supposed to transform stay largely intact.

Organizations have become very good at measuring AI exposure: they know who has access, who has completed training, and who has logged in. What they’re not measuring is whether any of it has changed how decisions get made, how work flows, or how people perform in their roles.

Without a skills strategy that connects AI tools to actual job outcomes, experimentation is all you get. And experimentation without direction is just expensive stagnation with better branding.

The skills hiding in plain sight

Here’s where the conversation needs to shift. When organizations ask “what skills do our people need for AI?” they almost always reach for technical answers such as prompt engineering, tool proficiency, and data literacy. And while these matter, they’re not what’s getting in the way of workplace transformation.

We are in the way. Or, to put it plainly, our skills are.

The capabilities that squeeze the most value out of AI are simply human: analytical thinking, critical reasoning, sound judgment, and clear communication. They include the ability to interrogate an output, recognize when something is wrong, ask a better question, and make a call that a model can’t.

You may know them as soft skills. Organizations have always valued them but rarely developed them systematically. They’ve been treated as innate qualities (things people either have or don’t) rather than as capabilities that can be built with the right learning design.

Want the full picture on what skills actually matter in the age of AI, and why most organizations are measuring the wrong things? The AI Readiness Gap report has the data. Read it here.

Why these skills are so hard to see

If human skills are the missing piece, why aren’t organizations investing in them more deliberately? The honest answer is that most organizations don’t have a clear picture of the skills their people actually have today.

And can we blame them?

Skills data tends to be scattered, self-reported, or completely absent. Job descriptions don’t capture real capability. Performance reviews capture outcomes, not the underlying competencies that drove them. And learning completion data tells you what someone sat through, not what they can actually do.

You can’t build toward AI readiness if you can’t see where you’re starting from.

Making skills visible, and mapping them to specific roles, workflows, and business outcomes, is the starting point (the catalyst, if you will) for AI transformation. Yet most organizations skip this step entirely. They go straight to deployment without ever establishing a baseline. It’s no wonder nothing changes.

Or even feels relevant to the user.

When organizations don’t have a clear picture of what skills each role actually needs, training becomes generic by default. People nod along as they’re told AI is important, but how does it apply to their roles? Who knows.

What a skills-first AI strategy actually looks like

The 9% of organizations that have moved from AI experimentation to AI transformation have something in common. They didn’t start with tools. They started with the question: What does this role need to look like in a world where AI is part of how work gets done? From there, they worked backward to identify the human capabilities that needed to be in place before AI could deliver real value.

Is this a complicated idea? Not really, but it does require treating skills visibility as an organizational priority, not an L&D side project. It requires connecting learning to business outcomes in a way that executives can see and measure. And it requires being honest about the fact that AI literacy is not the same thing as AI fluency.

The question that follows

Organizations that get this right will stop asking whether their people have completed AI training and instead measure whether they can actually apply it with confidence in their roles.

Is this what we’re seeing today? More than half of learners say it isn’t. The data suggests most organizations have no idea how wide the relevance gap has become. We’ll unpack that in the next post.

The AI Readiness Gap: The 2026 Enterprise Learning Wake-Up Call reveals why 91% of organizations are stuck, and what the 9% are doing differently. Download the full report.